Machine Learning in Self-Driving Cars

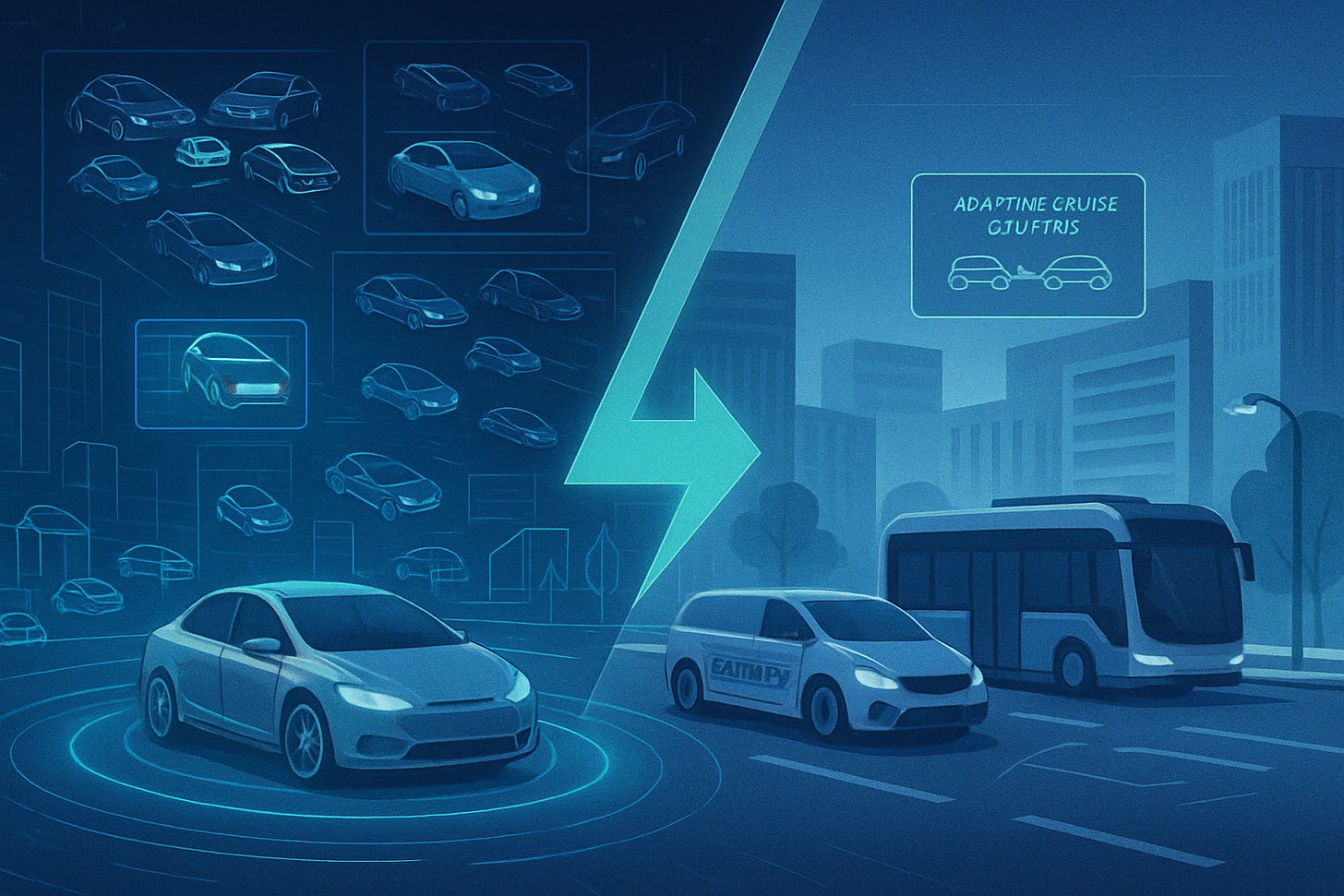

How Machine Learning Drives Self-Driving Cars

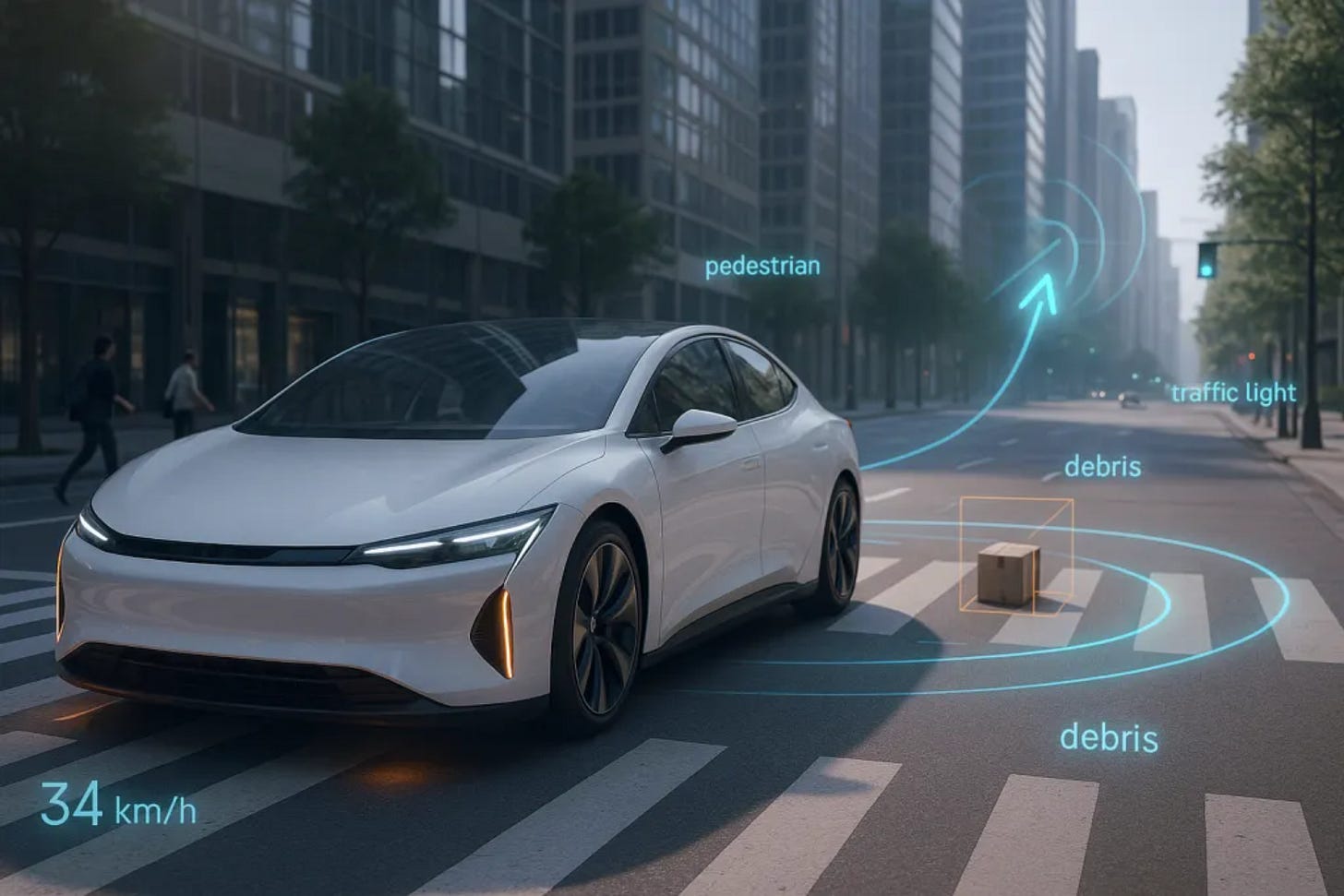

A car glides through traffic, stops at a crosswalk, swerves slightly to avoid road debris, then accelerates smoothly as the light turns green, yet no one’s touching the wheel. It seems surreal, but millions of lines of code, years of road testing, and a powerful branch of artificial intelligence are making this moment possible. That branch is machine learning, and it’s quietly reshaping how vehicles move through the world. In this article, we look at what a self-driving car actually is, how machine learning is applied, and what’s next for these vehicles.

What Makes a Car “Self-Driving”?

A self-driving car, or autonomous vehicle, is designed to operate with little to no human input. It senses its environment, interprets what’s happening, and makes decisions in real time. The level of autonomy varies, though: some cars handle highway driving but hand over control in cities, while others are pushing toward full autonomy, with no steering wheel at all. What they all have in common is the ability to make decisions by learning from data. These cars adapt through learning instead of just following pre-coded instructions.

Machine Learning: The Brain Behind the Wheel

To make this learning possible, developers use machine learning, a set of techniques that let computers identify patterns and improve at tasks without being explicitly programmed for each one. In the context of driving, it helps cars recognize stop signs, interpret lane markings, detect pedestrians, and adjust to constantly changing conditions such as traffic. At its core, it’s about experience. Just as a human gets better at driving by encountering more traffic scenarios, a machine learning model improves by being exposed to vast amounts of driving data (millions of images, sensor readings, and driving outcomes).

How the Car Learns

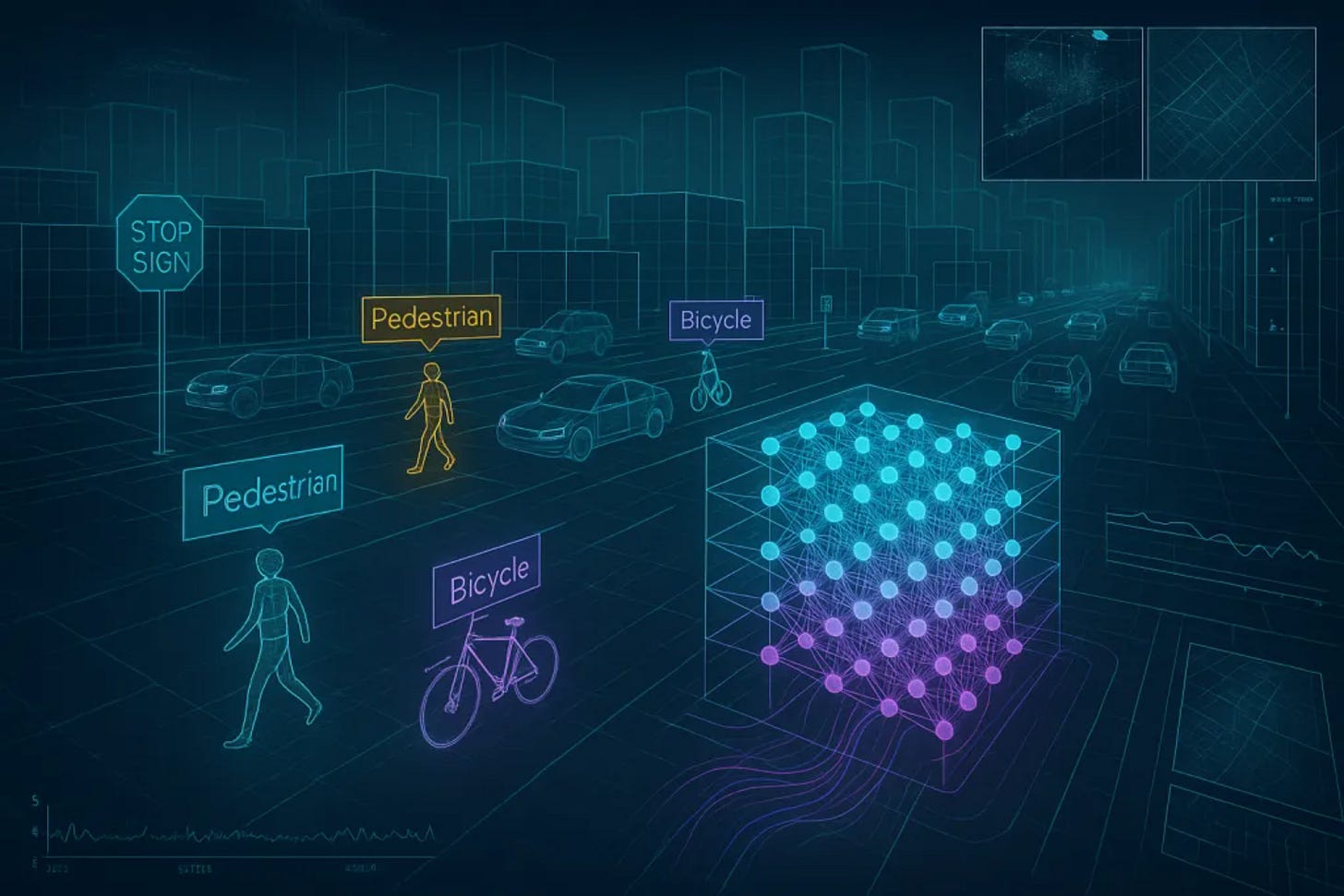

The learning process begins long before the car hits the road on its own. It starts with data: hours of human driving, annotated road scenes, 3D maps, and simulated environments. These are used to train neural networks, algorithms loosely modeled on the human brain. During training, the system makes predictions (“this is a stop sign”) and gets corrected when it’s wrong. Over time, its accuracy improves, sometimes surpassing human reliability in specific tasks like lane detection or object tracking.
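To make the predict-and-correct loop concrete, here is a minimal sketch in Python. It is not how any production system is built: it trains a single artificial neuron (logistic regression) rather than a deep neural network, and the two input features, `redness` and `octagon_score`, along with the toy data, are invented purely for illustration.

```python
import math


def sigmoid(z: float) -> float:
    """Squash any real number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))


def predict(weights, bias, features):
    """Estimated probability that the features describe a stop sign."""
    z = sum(w * x for w, x in zip(weights, features)) + bias
    return sigmoid(z)


def train(samples, labels, epochs=200, learning_rate=0.5):
    """Fit one neuron with stochastic gradient descent on log loss."""
    weights = [0.0] * len(samples[0])
    bias = 0.0
    for _ in range(epochs):
        for features, label in zip(samples, labels):
            # 1. The model makes a prediction.
            guess = predict(weights, bias, features)
            # 2. It is "corrected": the error is the gap to the true label.
            error = guess - label
            # 3. Weights are nudged in the direction that shrinks the error.
            weights = [w - learning_rate * error * x
                       for w, x in zip(weights, features)]
            bias -= learning_rate * error
    return weights, bias


# Hypothetical toy data: [redness, octagon_score]; label 1 means "stop sign".
samples = [[0.9, 0.95], [0.8, 0.9], [0.85, 0.8],
           [0.2, 0.1], [0.3, 0.2], [0.1, 0.3]]
labels = [1, 1, 1, 0, 0, 0]

weights, bias = train(samples, labels)
```

After training, `predict(weights, bias, [0.9, 0.9])` returns a probability above 0.5 and `predict(weights, bias, [0.15, 0.15])` returns one below 0.5. Before training, with all weights at zero, every input scores exactly 0.5. The improvement comes entirely from the repeated corrections.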

The car learns to:

Identify and classify objects (cars, bikes, signs, people)

Predict what those objects might do next

Decide the safest, most efficient action
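The three steps above form a pipeline, and the flow of data between them can be sketched in a few lines of Python. In this sketch, each stage is a simple hand-written stand-in for what would be a learned model in a real vehicle. The names `TrackedObject`, `predict_gap`, and `decide`, and the thresholds, are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class TrackedObject:
    label: str                # result of the classification step, e.g. "pedestrian"
    distance_m: float         # current gap between the car and the object, in meters
    closing_speed_mps: float  # positive when the gap is shrinking


def predict_gap(obj: TrackedObject, horizon_s: float) -> float:
    """Predict the gap after horizon_s seconds, assuming constant closing speed."""
    return obj.distance_m - obj.closing_speed_mps * horizon_s


def decide(objects, horizon_s: float = 2.0, safe_gap_m: float = 10.0) -> str:
    """Brake if any object is predicted to come closer than safe_gap_m."""
    for obj in objects:
        if predict_gap(obj, horizon_s) < safe_gap_m:
            return "brake"
    return "cruise"


scene = [
    TrackedObject("car", distance_m=40.0, closing_speed_mps=2.0),
    TrackedObject("pedestrian", distance_m=18.0, closing_speed_mps=5.0),
]
print(decide(scene))      # brake: the pedestrian's predicted gap is 8 m
print(decide(scene[:1]))  # cruise: the car's predicted gap is 36 m
```

The constant-speed assumption in `predict_gap` is the crudest possible prediction. It is exactly the kind of fixed rule that a learned model is meant to replace.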

These autonomous cars learn all this not from rules, but from patterns, often patterns that even humans might fail to notice.

What’s Inside a Self-Driving Car?

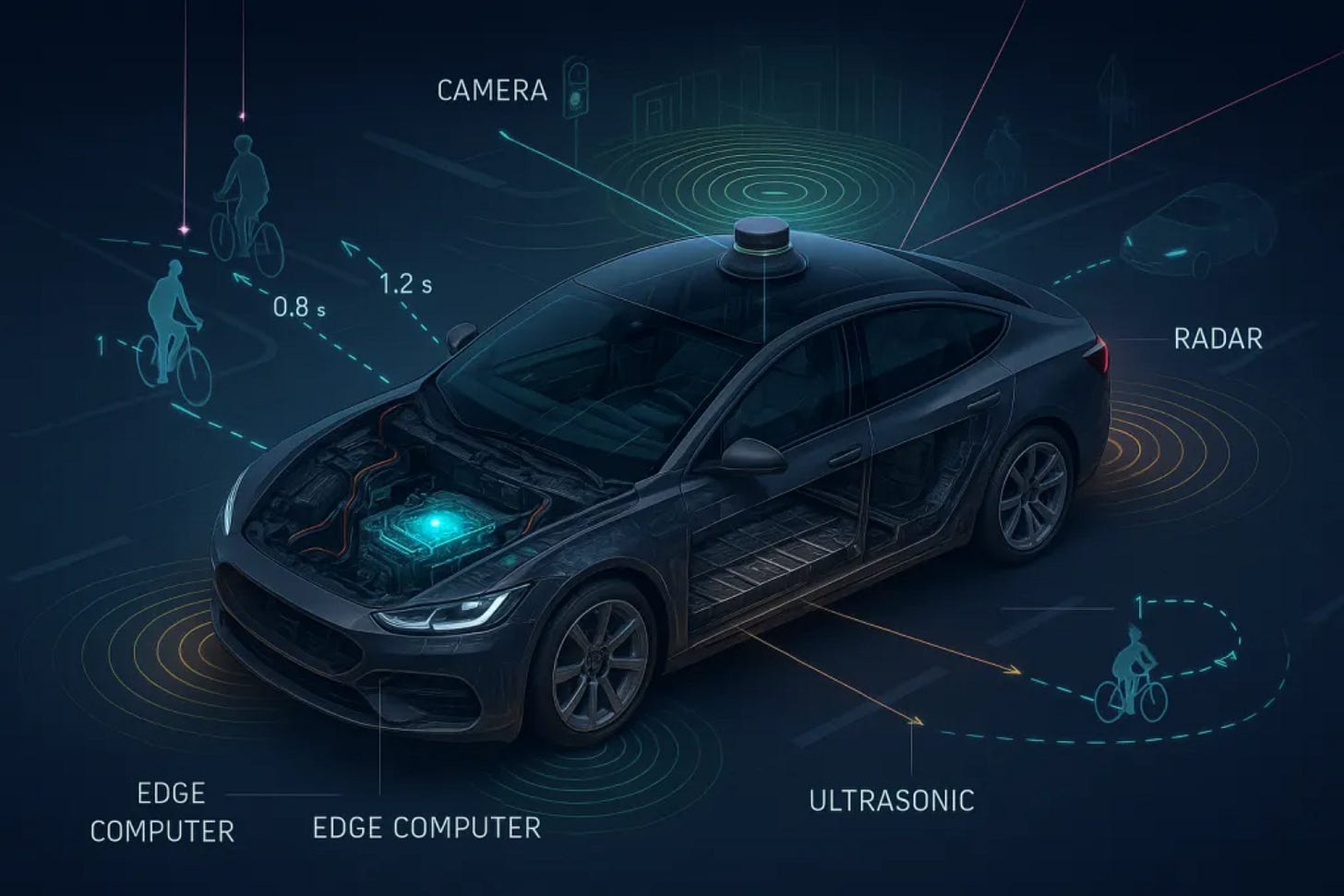

Under the hood (and roof, and bumper) is a network of sensors and processors working together:

Cameras capture visual data, like lane markings or stop lights

LIDAR (Light Detection and Ranging) scans the environment with laser pulses to build a 3D map

Radar detects the speed and distance of moving objects

Ultrasonic sensors help with short-range detection (like parking)

GPS and inertial sensors pinpoint the car’s location

Edge computers process all this in milliseconds to steer, brake, or accelerate

The result is a digital mind that doesn’t just react but anticipates, watching not just the cars ahead but also the pedestrian about to step off the curb, the bicyclist changing lanes, or the tailgater speeding up in the mirror.
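Because several of these sensors measure the same thing, the car has to combine their readings into one estimate. One simple textbook way to do this is inverse-variance weighting, sketched below in Python. The readings and noise figures are made-up assumptions, and real vehicles generally rely on more elaborate methods such as Kalman filters.

```python
def fuse(estimates):
    """Combine (value, variance) pairs with an inverse-variance weighted average.

    Less noisy sensors (smaller variance) get more weight, and the fused
    estimate has a lower variance than any single input.
    """
    weights = [1.0 / variance for _, variance in estimates]
    total_weight = sum(weights)
    fused_value = sum(w * value
                      for w, (value, _) in zip(weights, estimates)) / total_weight
    fused_variance = 1.0 / total_weight
    return fused_value, fused_variance


# Hypothetical distance-to-obstacle readings in meters: (value, variance).
radar_reading = (25.0, 4.0)  # assumed noisier
lidar_reading = (24.0, 1.0)  # assumed more precise

distance_m, variance = fuse([radar_reading, lidar_reading])
print(round(distance_m, 2), round(variance, 2))  # 24.2 0.8
```

The fused distance lands closer to the lidar reading because it was assumed to be the more precise sensor, and the combined variance (0.8) is smaller than either input's.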

Where Things Get Complicated

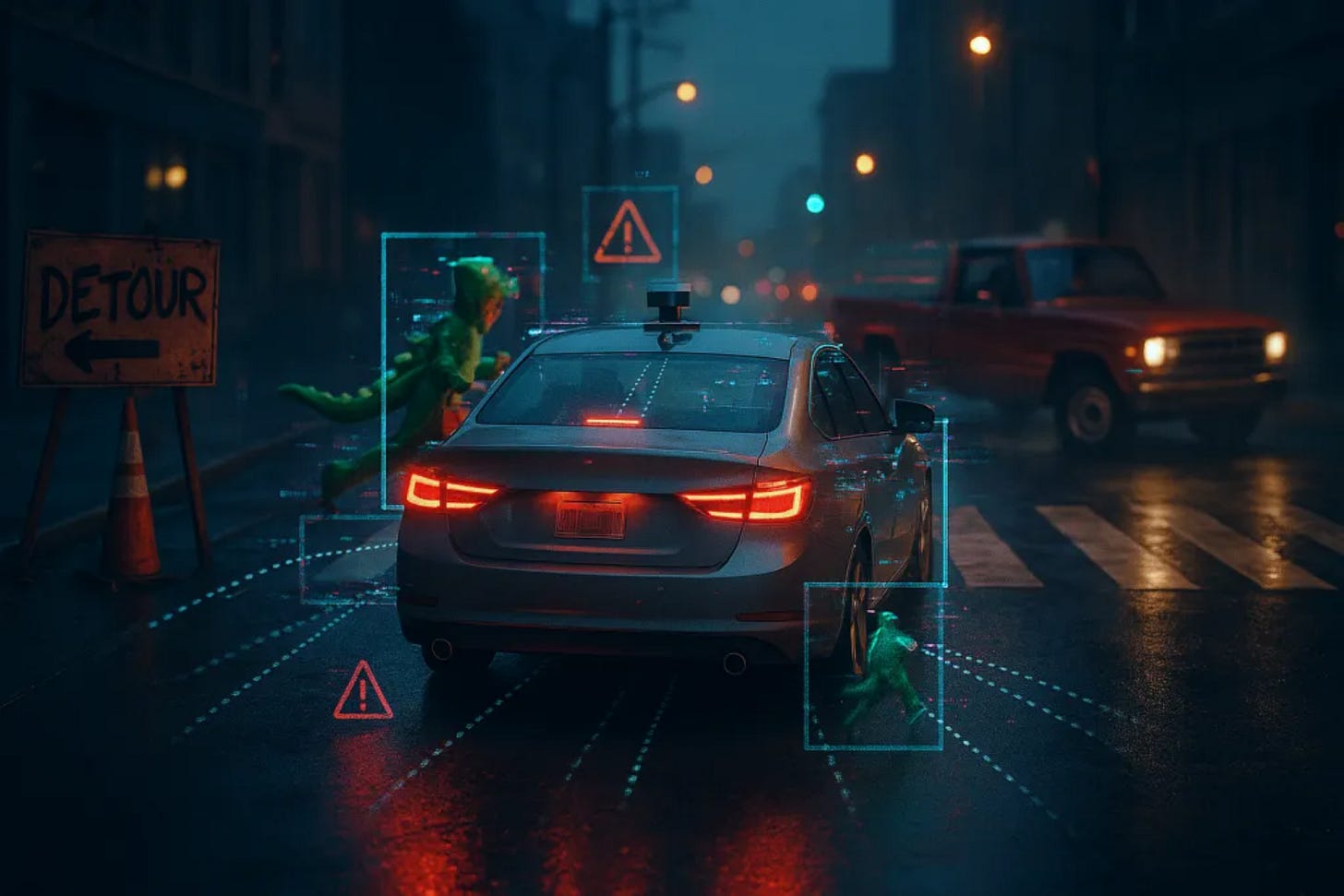

Despite remarkable progress, autonomous vehicles still face situations that challenge even the most advanced machine learning systems. Rare events, the ones not well represented in training data, can confuse even the best models. A child in a costume darting into the road, a makeshift construction sign, or unexpected behavior from other drivers can trip up an AI system that hasn’t “seen” that exact scenario before. There is also the human factor: the rules of the road are rarely followed perfectly, and it would be naive to assume otherwise. These edge cases aren’t just bugs to be fixed. They’re reminders that driving involves a blend of logic, social cues, and instinct, a combination that machine learning still struggles to replicate.

What Comes Next?

Self-driving cars are improving rapidly, thanks to faster processors, better sensors, and smarter training methods. Simulation tools now let cars “experience” millions of driving miles virtually, accelerating their learning without the risks of real-world testing. Over the next decade, autonomous vehicles will likely become more common in delivery fleets, ride-hailing services, and public transit. Private ownership might take longer, but core technologies such as adaptive cruise control, automatic emergency braking, and lane centering are already mainstream. Bit by bit, the line between driver assistance and full autonomy is fading.
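To give a feel for what virtual testing means, here is a toy simulation in Python. It does not train a model. Instead, it generates many random scenarios in which a car approaches a stopped obstacle, and it measures how often a simple hand-written braking rule (brake once the time to collision drops below a threshold) stops the car in time. The function names, speeds, gaps, and deceleration are all illustrative assumptions.

```python
import random


def run_scenario(gap_m, speed_mps, ttc_threshold_s,
                 decel_mps2=6.0, dt=0.05):
    """Simulate one approach to a stopped obstacle.

    Returns True if the car comes to rest before reaching the obstacle.
    """
    braking = False
    while speed_mps > 0:
        if gap_m <= 0:
            return False  # reached the obstacle while still moving
        # Time to collision if the car kept its current speed.
        if gap_m / speed_mps < ttc_threshold_s:
            braking = True
        if braking:
            speed_mps = max(0.0, speed_mps - decel_mps2 * dt)
        gap_m -= speed_mps * dt
    return gap_m > 0


def evaluate(ttc_threshold_s, n_scenarios=1000, seed=0):
    """Fraction of randomly generated scenarios that end without a collision."""
    rng = random.Random(seed)  # fixed seed so every rule sees the same scenarios
    safe = 0
    for _ in range(n_scenarios):
        gap_m = rng.uniform(20.0, 100.0)
        speed_mps = rng.uniform(5.0, 30.0)
        if run_scenario(gap_m, speed_mps, ttc_threshold_s):
            safe += 1
    return safe / n_scenarios


for threshold in (1.0, 2.0, 3.0):
    print(threshold, evaluate(threshold))
```

Because every threshold is tested against the same thousand scenarios, the results are directly comparable: a rule that brakes earlier never does worse here. Real simulators work on the same principle at vastly larger scale, with realistic physics, sensors, and other road users.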

A Different Kind of Driver

Machine learning has turned driving from a manual task into a complex, evolving conversation between software and the world around it. What used to be instinct (the feel of the road, the timing of a merge, the subtle read of a pedestrian’s intent) is now being captured in algorithms, frame by frame, mile by mile. Every time a car avoids a crash, handles an unfamiliar detour, or slows down for someone texting in the crosswalk, it’s not just reacting. It’s recalling patterns from millions of past experiences. It’s not just a machine that moves. It’s a machine that learns, and a learning machine has the power to do big things.